The Next-Gen Research Stack: Agents, Experimentation, and Evaluation

Welcome to another edition of Think First: Perspectives on Research with AI. Last time we heard from Utpala Wandhare, who shared how she’s using AI to streamline research, strengthen cross-functional collaboration, and stay human-centered in an increasingly automated world.

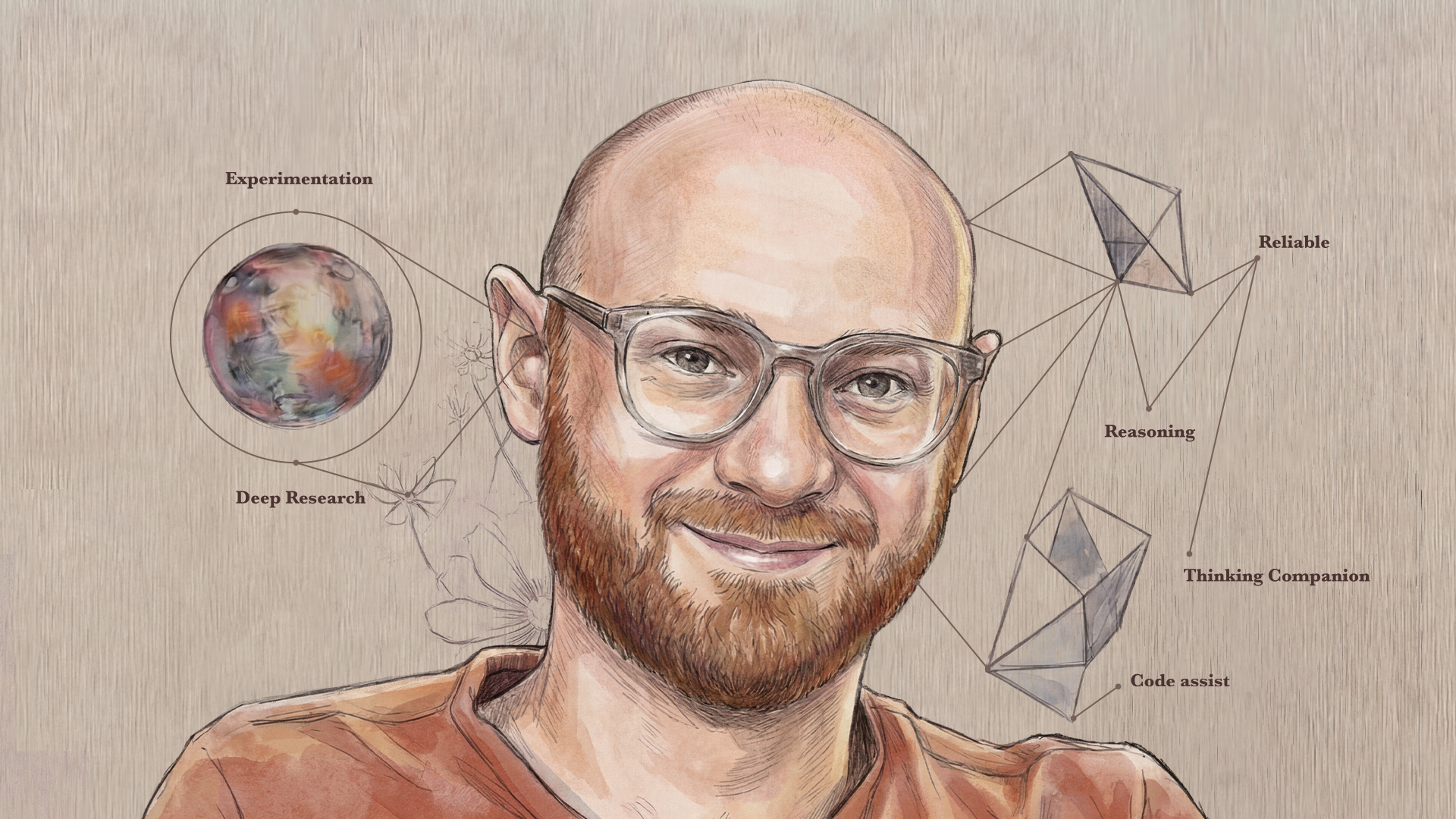

Today, we’re hearing from Zachary Bodnar, who leads UX Research for the AI portfolio at Red Hat — focused on supporting builders and operators who train, fine-tune, and deploy traditional and LLM-based models. With a career spanning data science, trust & safety, and next-generation AI products at companies like LinkedIn and Meta, Zack brings a systems-level view of how AI is reshaping not just research tasks—but research operating models.

In our conversation, Zack kept coming back to a simple idea: AI doesn’t just speed up tasks—it changes the shape of the system. The opportunity (and the challenge) is to redesign research workflows around experimentation, agentic tooling, and rigorous evaluation.

“A lot of organizations are still in the ‘sprinkle AI on it’ phase. The real shift is redesigning the system around what’s now possible.”

Zack’s AI origin story: From code assist to context engineering

Zack’s early AI “unlock” wasn’t abstract—it was practical. In the ChatGPT 3/3.5 era, he felt the impact most in quantitative work, where AI helped collapse turnaround time for analysis.

“I was using it for quant work—helping write Python to analyze stuff. It took a basic analysis from a day down to like half an hour. It was a huge unlock.”

But for a while, the value stayed mostly in tactical acceleration. The models weren’t yet strong enough at reasoning to reliably support more complex research work.

“For a while I had this ‘lower-boundary’ model in my head—like, there’s a floor for usefulness, and I wasn’t sure how far above that floor we could get.”

By early 2025, better model reasoning capabilities made the next question unavoidable: not just whether the model can reason, but whether it can reason with the right inputs. That’s where “context engineering” became central—getting the right information into the model at the right time.

“It stopped being ‘can it reason?’ and became ‘can it reason about what matters?’ And that’s mostly a context problem.”

Why most AI experiments fail — and that’s OK

On experimentation, Zack pushed back on the narrative whiplash between hype and cynicism. In his view, a high “failure rate” is exactly what you should expect when you’re doing real discovery—and the organizations that win are the ones that build a learning loop that can survive it.

“If you’re really experimenting, most things won’t work, and that’s not failure. That’s the process. The value is building the organizational tolerance, and the learning loop, to keep going until a few experiments deliver outsized returns.”

Most serious experiments will be dead ends, and that’s expected. For Zack, what matters is whether you keep learning long enough to find the few things that truly change outcomes.

“If you ask people to list everything they tried—not just what worked—it’s usually five dead ends and one thing that’s amazing. You have to be OK throwing time at things that won’t work to find the couple that do.”

For research teams, the takeaway is uncomfortable but useful: if the organization can’t tolerate “wasted” cycles, it will either stop too early—or ship brittle solutions. Experimentation only works when learning is treated as an outcome, not a side effect.

Four phases of GenAI adoption

Zack described a practical adoption arc he’s observed across teams:

“There’s a path teams tend to follow: search replacement, task assistance, thinking companion—and then system redesign.”

- Search replacement: drop a query into AI instead of a search engine

- Task assistance: use AI for repeatable tasks like drafting, code generation, and planning

- General-purpose thinking companion: AI becomes an always-on collaborator, like the internet or a phone

- System redesign: re-architect work with the assumption that AI exists

It’s that third phase—AI as a general-purpose thinking companion—that creates an especially important shift for researchers: your potential scope expands. Zack described how researchers often want to do deeper desk research and internal/external synthesis—but historically those activities could take weeks. With AI, teams can compress that exploration into a day and arrive with a far more comprehensive starting point.

“The third phase changes your scope. Things that used to take weeks—desk research, a landscape scan—you can compress into a day and start with a much better ‘lay of the land.’ It’s not just that AI makes you faster. It makes a different level of rigor feasible under real constraints.”

Current practice: Agentic tooling for research reuse and research-informed PRDs

When we got concrete, Zack described two places he’s investing: (1) agentic tooling that makes research easier to find and reuse, and (2) ways to ensure research stays legible as more product work becomes AI-mediated.

1) A “deep research agent” for the research repository problem: Many organizations have hundreds (or thousands) of research reports—but still struggle with a familiar question: when someone has a question, how do they find the right prior evidence quickly?

“We have all this research, but when someone has a question, it’s still hard to find the right prior evidence quickly. That’s the repository problem.”

The ambition sounds simple: treat your research library like a living knowledge base—one place where anyone can query past studies and get an evidence-backed answer. In practice, that last mile is still where most tools fall short.

“I haven’t seen something that fully nails it yet—so we’re building our own. You ask a question, and the agent scans our research and tells you what we already know. The goal is broader access to learnings—without turning research into a black box.”
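To make the repository problem concrete, here is a minimal sketch of the "ask a question, get prior evidence" step. It is not Zack's team's agent: the report IDs, summaries, and the `search` function are hypothetical, and plain keyword overlap stands in for whatever retrieval a real system would use. The point it illustrates is that every answer comes back tied to a report a person can open and check.

```python
import re

# Hypothetical report summaries; a real repository would hold full documents.
REPORTS = {
    "R-001": "Operators struggle to monitor fine-tuned model deployments.",
    "R-002": "Builders want faster onboarding for model training pipelines.",
    "R-003": "Survey of dashboard usability for deployment monitoring.",
}


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def search(question: str, reports: dict[str, str] = REPORTS, top_k: int = 2) -> list[str]:
    """Return IDs of the reports sharing the most words with the question."""
    question_words = set(tokenize(question))
    scored = []
    for report_id, summary in reports.items():
        overlap = len(question_words & set(tokenize(summary)))
        if overlap:
            scored.append((overlap, report_id))
    # Highest overlap first; ties are broken by report ID for stable output.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [report_id for _, report_id in scored[:top_k]]


print(search("How do operators monitor deployments?"))
```

With these sample summaries the query matches only R-001. R-003 is about the same topic but uses "monitoring" and "deployment", so exact keyword matching misses it, which is a small picture of why the last mile is hard.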

2) Research insights that are consumed by AI systems (not just humans): The second effort reflects a bigger shift Zack sees coming: as companies introduce AI agents into product development workflows, research must be legible not only to humans, but to AI systems.

“If AI systems are helping build PRDs and drive downstream work, research has to be legible to those systems—not just to humans reading a report.”

To make that point tangible, Zack reached for an example from industrial history: the biggest productivity gains didn’t come from adopting a new power source—they came later, once people redesigned the work around it.

“Early electrification was basically: replace the steam engine with an electric motor. The real transformation came when you reorganized the factory around what electricity made possible. ‘I’m a researcher with AI’ is like ‘I’m a factory with electricity.’ The question is: how does the workflow change when AI is assumed to exist?”

That’s why he’s skeptical of surface-level adoption. If the frame stays “I’m a researcher with AI” or “I’m a developer with AI,” you may miss the larger opportunity: redesigning workflows so research and product decisions stay connected, even when AI agents enter the loop.

Defining quality first: Evaluation as the foundation for agentic research

For Zack, agentic tooling only helps if people can trust the outputs — and trust has to be earned with explicit evaluation.

“If you can’t measure quality, you can’t build trust. And if you can’t build trust, the agent doesn’t matter. Are we actually getting better? Is it reliable? Are citations accurate? Are the insights accurate?”

The starting point for Zack’s team was to define what “good” looks like and score against it, using standard and custom rubrics. They pair rubric scoring with internal user feedback, then update the rubric as real use uncovers new failure modes. The goal isn’t perfection; it’s a repeatable loop where quality is visible and improving over time.
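As a rough illustration of what scoring against a rubric can look like, here is a minimal sketch. The criteria names, weights, and 1-to-5 rating scale are assumptions chosen to echo the questions in the quote above (citation accuracy, insight accuracy); they are not the rubric Zack's team uses.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    name: str
    weight: int  # relative importance (hypothetical values)


# Hypothetical rubric, loosely based on the quality questions quoted above.
RUBRIC = [
    Criterion("citation_accuracy", 2),
    Criterion("insight_accuracy", 2),
    Criterion("completeness", 1),
]


def rubric_score(ratings: dict[str, int], rubric=RUBRIC, max_rating: int = 5) -> float:
    """Weighted average of per-criterion ratings, scaled to the range 0..1."""
    missing = [c.name for c in rubric if c.name not in ratings]
    if missing:
        raise ValueError(f"missing ratings for: {missing}")
    total_weight = sum(c.weight for c in rubric)
    weighted = sum(c.weight * ratings[c.name] / max_rating for c in rubric)
    return weighted / total_weight


def mean_score(all_ratings: list[dict[str, int]]) -> float:
    """Average rubric score across a batch of reviewed agent answers."""
    scores = [rubric_score(r) for r in all_ratings]
    return sum(scores) / len(scores)


# One reviewed answer: perfect citations, weaker insights, decent coverage.
print(rubric_score({"citation_accuracy": 5, "insight_accuracy": 3, "completeness": 4}))
```

Tracking `mean_score` for the same set of test questions after each change to the agent is one simple way to make "are we actually getting better?" answerable. When a new failure mode shows up, it becomes a new `Criterion`.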

Challenges, risks, and fundamental tensions

One of the hardest parts of adopting generative tools is that they force a tradeoff teams don’t like to name: speed wants momentum, while quality and risk management demand friction. You can’t optimize both at once—and most organizations end up trying anyway.

“Every AI product says, ‘This could be wrong’—and at the same time everyone wants rapid adoption. That tension is real. If you have to verify every single thing, it’s not actually faster. But if you don’t verify anything, you’re taking on risk.”

Zack called out two risk zones in particular:

1) Unpredictable usage in production: Even if you evaluate for the scenarios you expect, people will ask unexpected questions — and the agent’s performance may degrade outside of tested conditions. Zack described mitigating this with a careful, staged rollout: starting with researchers before scaling more broadly.

“We try to roll it out in rings: start with researchers — critical and kind — then design, then really pressure-test with product before scaling broader.”

2) Compounding error in AI-to-AI workflows: When an agent’s output feeds an AI-driven workflow (e.g., influencing a PRD assembled by other agents), even small issues—accurate but poorly phrased, subtly misleading, or incomplete—can create downstream effects that are hard for humans to catch.

“When an agent’s output becomes another agent’s input, small issues can compound in ways that are hard to catch. That’s the classic compounding error problem. Even if something is accurate, it might be phrased in a way that triggers a downstream chain—and there’s not a human that necessarily can catch it. The good news is it’s all digital—so we have telemetry and tracing, and we can put in guardrails.”

The upside, Zack noted, is that digital systems can support tracing and telemetry. Teams can implement guardrails and checkpoints, and design human-in-the-loop moments to prevent the system from going off the rails.
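The compounding-error risk described above can be made concrete with some simple arithmetic. The sketch below assumes, purely for illustration, that each step in an AI-to-AI chain produces an acceptable output 95% of the time and that steps fail independently of each other. Real pipelines will not behave this neatly, and the 95% figure is not from Zack.

```python
def chain_reliability(step_reliability: float, steps: int) -> float:
    """Probability that every step in a chain is acceptable.

    Assumes each step succeeds independently with the same probability,
    which is a simplification for illustration.
    """
    if not 0.0 <= step_reliability <= 1.0:
        raise ValueError("step_reliability must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return step_reliability ** steps


for steps in (1, 3, 5, 10):
    end_to_end = chain_reliability(0.95, steps)
    print(f"{steps:>2} steps at 95% each: {end_to_end:.0%} end to end")
```

Under these assumptions, a five-step chain is fully clean only about 77% of the time, and a ten-step chain about 60%. That is the case for placing checkpoints and human review inside a long chain rather than only at the end.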

Looking ahead: Two “non-AI” skills Zack thinks will matter more

When asked where the field is headed, Zack intentionally stepped away from predicting specific model capabilities. He argues that LLMs are important, but not the whole story.

“LLMs are important, but they’re a point-in-time technology in the generative space; maybe what’s next is diffusion models, maybe world models… I’m more interested in what stays valuable when AI is everywhere.”

Instead, he offered two hunches about what will remain valuable as AI becomes ubiquitous:

1) The experience of being seen by another human: Zack believes there will be enduring value in interactions where people know a real person is present, whether that’s research participation or workplace collaboration.

“There’s something irreplaceable about being seen by another human. In a world full of AI attention, real human attention gets rarer—and more meaningful.”

2) Taste: Zack described “taste” as the human ability to discern quality—especially when the right answer is partly subjective, contextual, or grounded in hard-won domain expertise. He expects there will always be a place for people who deeply understand what good looks like in their field—both to guide decisions and to help AI systems improve.

“Taste is knowing what ‘good’ looks like. When the right answer is subjective or contextual, that judgment doesn’t go away. Is AI ever going to get good at discerning what is good art? I don’t know. If it has labels, sure. But there’s something about a person’s ability to tell what is good—what is really of high quality. And professionally, there’s going to be a place for people who really deeply understand what good looks like in their field.”

Advice for researchers: Know your adoption stage — and use AI as a force multiplier

Zack’s guidance for researchers trying to prepare for what’s next starts with self-awareness: understand where you fall on the adoption spectrum—search replacement, task assistance, or general-purpose collaboration—and give yourself both space and urgency to move forward.

“Know where you are on the adoption curve—and then be intentional about moving forward. Treat it like a set of junior collaborators—a junior copywriter, a junior coder, a junior researcher. You can direct it, but you still have to steer.”

Most importantly, he encouraged researchers to focus less on rigid role definitions (“what methods do I run?”) and more on how they create value. Like Josh Williams, Zack calls on researchers to think about how they show up as strategic thought partners, meet stakeholders where they are, and help teams move from problems to solutions.

Similar to Rose Beverly, Zack also suggested staying open to role blending as boundaries shift — because as AI becomes more capable, the expectation to “stay in your lane” may weaken, and the ability to translate research into broader strategic impact will matter more.

Finally, Zack returned to a human theme: don’t forget the people. Everyone is navigating a mix of excitement and fear, and the researchers who keep that reality at the center—whether with participants or colleagues—will continue to be essential.

“Don’t forget the people. Everyone’s carrying some mix of excitement and fear through this transition. People who keep that at the core of what they do will continue to be really critical and really important.”

A practical tip: Don’t ignore “coder tools”

To close, Zack offered a tactical recommendation: even if you don’t code, explore the AI tools built for developers (like Cursor, Claude Code, VS Code, or similar environments). Many of these tools effectively give you a “junior copywriter” workflow: outline, draft, highlight sections, iterate, and manage a task list — just applied to writing instead of code.

“Even if you don’t code, try the developer AI tools. They’re basically a great ‘junior copywriter’ workflow — outline, draft, highlight, iterate — just in a different interface.”

Like Savina Hawkins, Zack noted that some of the most capable AI writing workflows may show up first in “coding” products, and researchers can benefit by borrowing those patterns.

Final thoughts

Zack’s perspective reframes the AI conversation from “what tools should we adopt?” to “what systems should we redesign?” His emphasis on experimentation, evaluation, and staged rollouts offers a realistic blueprint for teams who want to move beyond hype — building agentic workflows that increase access to research, strengthen strategic alignment, and keep quality measurable as scale grows.

“The question isn’t ‘what tool should we adopt?’ It’s ‘what system are we rebuilding?’”

At the same time, his future-looking emphasis on being seen and having taste is a reminder: as AI becomes more present, the most human parts of research — judgment, discernment, and connection — may become even more valuable. These thoughts are echoed by researchers like Chelsey Fleming and Rodrigo Dalcin.

Zack also recommended the following resources for further reading:

- Frank Kanayet on the idea that a researcher can execute a host of methods in parallel using AI

- Yahav Manor driving work on Research Agents on Zack’s team at Red Hat

- Erik Brynjolfsson on the introduction of electricity to manufacturing processes

- Francis Fukuyama’s writings on the importance of being seen by another person, in particular his book Identity: The Demand for Dignity and the Politics of Resentment

Next week, we’ll give you a behind-the-scenes view into the making of the “Think First” series. Tune in to learn more about the team behind the screen and what we have in store next.